Anticipating the Privacy and Informed Consent Issues of the Neurotechnology Era

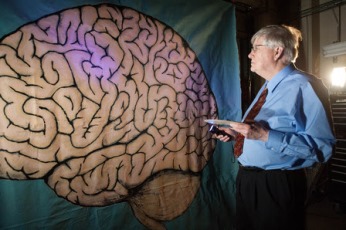

In conjunction with the new National Geographic program “Breakthrough: Decoding the Brain,” coming this Sunday, November 15, at 9 pm ET, I was asked to provide my perspective on a very provocative question:

What if scientists were able to implant or erase memories? For some, like those suffering from PTSD, this could be life-changing, or do you think this is scientific innovation gone too far?

The question is very timely. 30,000+ scientists and professionals gathered for the annual Society for Neuroscience conference this past October in Chicago, proving that more people than ever are working to improve our understanding of the human brain, and to discover ways and technologies to enhance its health and performance.

Having just edited a major report on “pervasive neurotechnologies,” and the ways in which applied neuroscience can help improve health, productivity, and more, my first reaction is to imagine all the great possibilities if we were able to “implant or erase memories,” such as helping deal with mental health issues, or accelerating learning (what if you could “implant” the capacities and vocabulary to speak fluent Mandarin?).

Coincidentally, though, I received the request above while reading the article “Medical Research: The Dangers to the Human Subjects,” by Marcia Angell, in The New York Review of Books. She reminds us that:

Given the American faith in medical advances (the NIH is largely exempt from the current disillusionment with government), it is easy to forget that clinical trials can be risky business. They raise formidable ethical problems since researchers are responsible both for protecting human subjects and for advancing the interests of science. It would be good if those dual responsibilities coincided, but often they don’t. On the contrary, there is an inherent tension between the search for scientific answers and concern for the rights and welfare of human subjects.

From first-hand experience, speaking about brain health and innovation topics to a variety of audiences, I know that many people have concerns about privacy loss and about diminished capacity for true “informed consent,” given the growing knowledge gap between experts and everyone else.

Here’s an example. The US Army, automotive companies, and medical and insurance companies are developing neurosensor-based systems to improve driving safety, employing car-based neural detection devices (via eye-tracking, EEG, or other methods) to monitor driver alertness and take preventive measures, for example with driver stimulation or vehicle autopilot/shutdown systems.

Sounds great, right?

But what if that data is shared with your insurance company? Or with the police, in real time? Doing so could certainly help reduce accidents due to sleepiness or drinking, but it could also create a Big Brother-type society most of us wouldn’t want to live in.

To continue with the driving example: Imagine that, despite all safety precautions, you end up having an accident. A horrible accident. One that leaves you so shocked that you never want to drive again.

Would you want to erase that memory?

Probably. But consider potential side effects. First, given how our brains work (“cells that fire together, wire together”), more likely than not you would also weaken other associated memories. Perhaps you would forget much about the people you were driving with, even losing the love you felt for your spouse, who was in the car too. Second, you would be less likely to learn from that experience, as bad as it was, and less likely to drive more carefully next time.

Instead of erasing the memory, you might want to consider alternatives. What if going through a few weeks of virtual reality-assisted cognitive therapy helps you manage the anxiety and the trauma, and equips you with a lifelong coping skill?

Who should make the decision, and based on what level of knowledge?

We live in exciting, transformational times, but we need to be proactive about anticipating and mitigating potential issues, aligning scientific innovation to the interests of individuals living in the here and now. We need to step back for a second and ask: How do we maximize the benefits and minimize the risks? That’s a longer conversation to have, but I believe a critical place to start would be to 1) establish privacy standards for the gathering, sharing and usage of brain activity data, and 2) strengthen informed consent policies, both in research and in practice, to prioritize the interests and the brain literacy of every proud brain owner.