Will these five NeuroRights help harness emerging neurotechnologies for the common good?

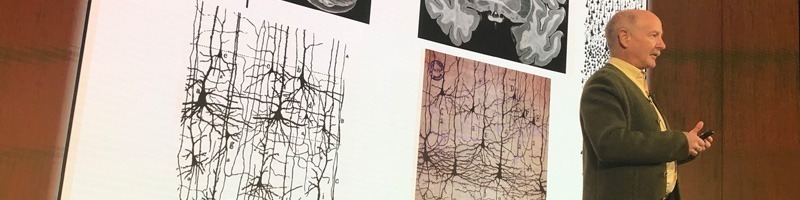

Data for Good: Biological Scientist, DSI Member Rafael Yuste on the Ethical Development of Neurotechnology (Columbia University release):

“Brain-computer interfaces may soon have the power to decode people’s thoughts and interfere with their mental activity. Even now the interfaces, or BCIs, which link brains directly to digital networks, are helping brain-impaired patients and amputees perform simple motor tasks such as moving a cursor, controlling a motorized wheelchair, or directing a robotic arm. And noninvasive BCIs that can understand words we want to type and place them onto screens are being developed.

But in the wrong hands, BCIs could be used to decode private thoughts, interfere with free will, and profoundly alter human nature.

To counter that possibility, Columbia University professor of biological sciences and Data Science Institute member Rafael Yuste founded the NeuroRights Initiative, which advocates for the responsible and ethical development of neurotechnology. The initiative puts forth ethical codes and human rights directives that protect people from potentially harmful neurotechnologies by ensuring the benign development of brain-computer interfaces and related neurotechnologies.”

The five NeuroRights are:

The Right to Personal Identity: Boundaries must be developed to prohibit technology from disrupting the sense of self or blurring the line between a person’s internal processing and external technological inputs;

The Right to Free Will: People should control their own decision making, without manipulation from external neurotechnologies;

The Right to Mental Privacy: Data obtained from measuring neural activity should be kept private, and the sale, commercial transfer, and use of neural data should be strictly regulated;

The Right to Equal Access to Mental Augmentation: Global guidelines should be established to ensure fair and just access to mental-enhancement neurotechnologies, which could otherwise further increase societal and economic disparities; and

The Right to Protection from Algorithmic Bias: Countermeasures to combat bias should be the norm for machine learning when used within neurotechnology devices, and algorithm design should include input from user groups to counter bias.

News in Context:

- A call to action: We need the right incentives to guide ethical innovation in neurotech and healthcare

- Let’s anticipate the potential misuse of neurological data to minimize the risks–and maximize the benefits

- New report: Empowering 8 Billion Minds via Ethical Development and Adoption of Neurotechnologies

- Growing debate about the ethics and regulation of direct-to-consumer transcranial direct current stimulation (tDCS)

- First, do no harm? Common anticholinergic meds seen to increase dementia risk

- The FDA creates new Digital Health unit to reimagine regulatory paths in the age of scalable, AI-enhanced innovation

- Five reasons the future of brain enhancement is digital, pervasive and (hopefully) bright

How to address privacy, ethical and regulatory issues: Examples in cognitive enhancement, depression and ADHD from SharpBrains